🧠 AI Engineering Lessons from Building a Local LLM App (Part 5)

Sun Jan 18 2026

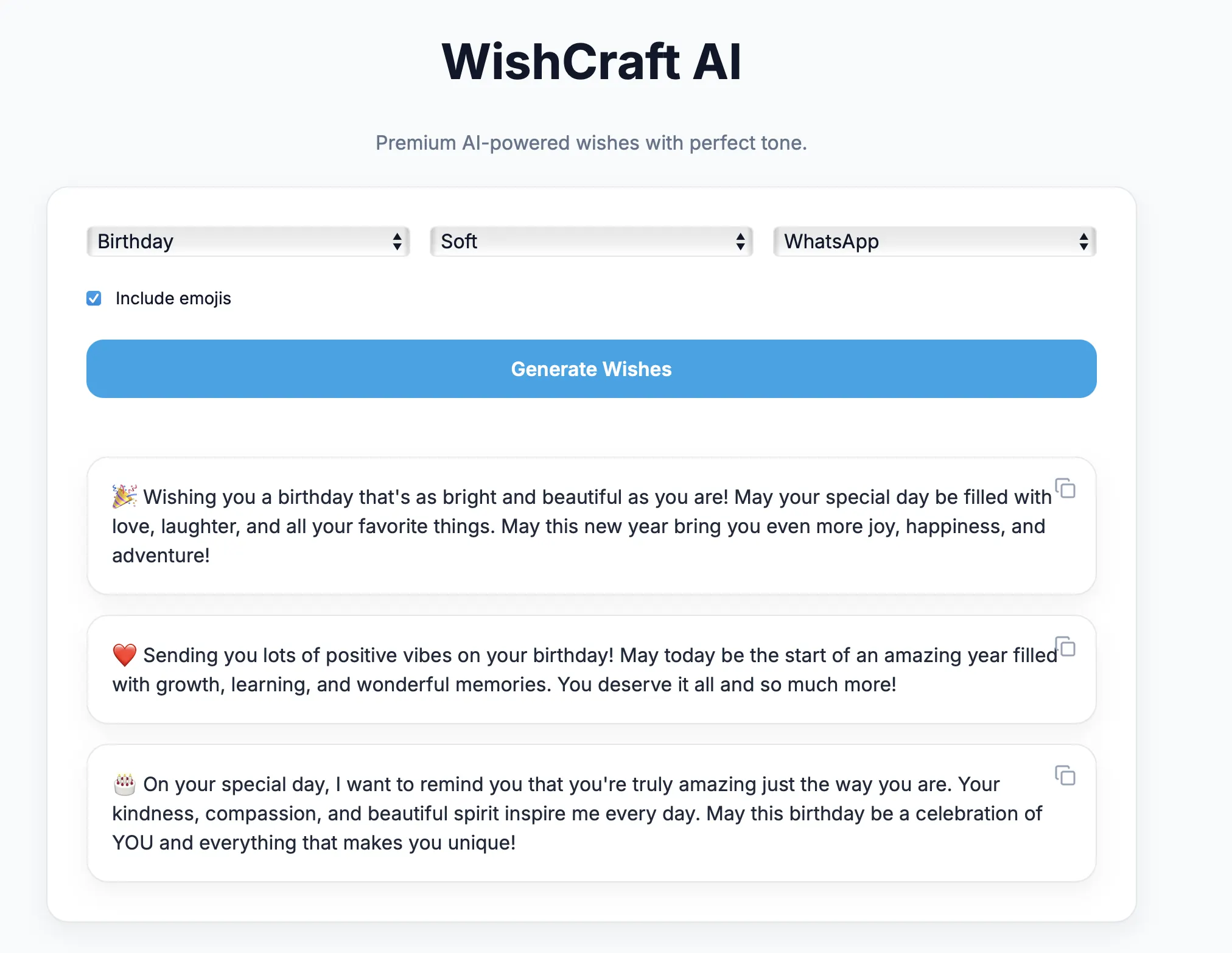

What Really Matters When Building Production-Ready AI Applications

🧩 Series Recap

This marks the final post in our 5-part journey of building a local LLM–powered AI Wish Generator.

Let’s quickly recap what we built:

- Part 1: Architecture & local LLM fundamentals

- Part 2: Backend development & prompt engineering

- Part 3: Modern Next.js frontend & UX design

- Part 4: Dockerization, Ollama & macOS challenges

- Part 5: AI Engineering Lessons from Building a Local LLM App

This post ties everything together.

🎯 Objective of the Final Part

In this article we will discuss:

- Real challenges faced while building the app

- Why LLM apps behave unpredictably

- Techniques to stabilize AI output

- Performance tuning strategies

- Streaming vs blocking responses

- When prompts fail and how to fix them

- When to introduce RAG

- Key AI engineering lessons

These topics are what separate demo apps from production AI systems.

⚠️ Common Challenges in AI Application Development

Unlike traditional software, LLM-based systems are non-deterministic: output is sampled token by token, so repeated runs can differ.

Even with the same input:

- Output wording changes

- Length varies

- Emojis behave inconsistently

- Tone may drift

This unpredictability is the core challenge of AI engineering.

🧠 Challenge 1: Unstable Output Format

Without strict rules, LLMs may:

- Ignore line breaks

- Merge multiple wishes

- Add explanations

- Change numbering styles

❌ Weak prompt

Write 3 birthday wishes.

✅ Stable prompt

Generate exactly 3 wishes.

Each must be multi-line.

Separate with a blank line.

Do not add headings.

Lesson: Prompt structure matters more than creativity.
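A prompt contract is easier to trust when the backend also checks it. The sketch below is a minimal illustration, not the project's actual code: `build_wish_prompt` and `parse_wishes` are hypothetical helper names, and the check simply mirrors the four rules of the stable prompt above.

```python
import re


def build_wish_prompt(occasion: str, count: int = 3) -> str:
    """Assemble a prompt whose rules mirror the stable prompt above."""
    rules = [
        f"Generate exactly {count} {occasion} wishes.",
        "Each must be multi-line.",
        "Separate with a blank line.",
        "Do not add headings.",
    ]
    return "\n".join(rules)


def parse_wishes(raw: str, count: int = 3) -> list[str]:
    """Split model output on blank lines and enforce the output contract."""
    blocks = [b.strip() for b in re.split(r"\n\s*\n", raw.strip()) if b.strip()]
    if len(blocks) != count:
        raise ValueError(f"expected {count} wishes, got {len(blocks)}")
    for block in blocks:
        if "\n" not in block:
            raise ValueError("each wish must span more than one line")
    return blocks
```

If `parse_wishes` raises, the backend can retry the generation or return a clear error instead of passing malformed text to the UI.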

🎭 Challenge 2: Emoji & Platform Drift

LLMs naturally mix emoji styles:

:tada: 🎉 ✨

Mixing shortcodes such as :tada: with Unicode emoji makes a message look out of place on the target platform.

Solution

- Explicit platform emoji rules

- Never allow mixed formats

- Limit emojis per line

This transformed output quality instantly.
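These rules can also be checked after generation. The following is a rough sketch under stated assumptions: `emoji_violations` is a hypothetical helper, the default limit of two emojis per line is illustrative, and the Unicode pattern only approximates the main emoji blocks rather than covering every emoji sequence.

```python
import re

# Shortcode style, e.g. :tada:
SHORTCODE = re.compile(r":[a-z0-9_+\-]+:")
# Approximation only: common symbol and emoji blocks, not the full emoji spec.
UNICODE_EMOJI = re.compile(r"[\u2600-\u27BF\U0001F300-\U0001FAFF]")


def emoji_violations(text: str, style: str = "unicode", max_per_line: int = 2) -> list[str]:
    """Report lines that mix emoji formats or exceed the per-line limit."""
    problems = []
    for number, line in enumerate(text.splitlines(), start=1):
        shortcodes = SHORTCODE.findall(line)
        unicode_emojis = UNICODE_EMOJI.findall(line)
        if style == "unicode" and shortcodes:
            problems.append(f"line {number}: shortcode {shortcodes[0]} not allowed")
        if style == "shortcode" and unicode_emojis:
            problems.append(f"line {number}: Unicode emoji not allowed")
        if len(shortcodes) + len(unicode_emojis) > max_per_line:
            problems.append(f"line {number}: more than {max_per_line} emojis")
    return problems
```

An empty list means the text passed; anything else is a reason to regenerate or to normalize the emojis before display.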

🧠 Challenge 3: Emotional Sensitivity

Condolence messages are dangerous if mishandled.

Problems include:

- Overly cheerful tone

- Inappropriate emojis

- Generic phrases

Guardrails added

- Emojis disabled by default

- Softer language rules

- Shorter sentence limits

AI systems must respect emotional context.
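One way to keep such guardrails consistent is to store them as data rather than scattering them through prompt strings. This is a sketch only: the `ToneGuardrails` class, the word limits (15 and 25), and the rule wording are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToneGuardrails:
    allow_emojis: bool
    max_words_per_sentence: int  # illustrative limit, not a measured value
    tone_rule: str


DEFAULT_GUARDRAILS = ToneGuardrails(True, 25, "Keep the tone warm and upbeat.")

# Sensitive occasions get stricter rules than the default.
GUARDRAILS = {
    "condolence": ToneGuardrails(
        False, 15, "Keep the tone gentle and sincere. Avoid celebratory language."
    ),
}


def guardrail_lines(occasion: str) -> list[str]:
    """Return the prompt lines that enforce tone for a given occasion."""
    rules = GUARDRAILS.get(occasion.lower(), DEFAULT_GUARDRAILS)
    lines = [
        rules.tone_rule,
        f"Keep every sentence under {rules.max_words_per_sentence} words.",
    ]
    if not rules.allow_emojis:
        lines.append("Do not use emojis.")
    return lines
```

The returned lines can be appended to the prompt, so a condolence request never inherits the cheerful defaults.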

⚡ Challenge 4: Latency Perception

Local LLM inference can take 5–10 seconds on CPU.

Users perceive this as slowness.

Even when technically acceptable, UX suffers.

🌊 Streaming vs Blocking Responses

Blocking (traditional)

User clicks generate

Wait 8 seconds

Response appears

Feels slow.

Streaming (recommended)

User clicks generate

Text appears immediately

Tokens stream live

Feels intelligent and fast.

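Streaming does not reduce total generation time. It improves perceived performance, by an estimated 60–70%, because the first tokens appear almost immediately.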

🧠 Streaming Architecture

Ollama (tokens)

↓

FastAPI StreamingResponse

↓

Next.js incremental render

Hosted chat products such as ChatGPT use the same token-streaming pattern in their UIs.
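The backend half of this pipeline can be sketched with the standard library alone. Ollama's `/api/generate` endpoint returns newline-delimited JSON when `stream` is true, with each chunk carrying a `response` fragment and a `done` flag. The function names and the localhost URL (Ollama's default port) are assumptions for illustration, not the project's actual code.

```python
import json
import urllib.request
from typing import Iterable, Iterator

OLLAMA_URL = "http://localhost:11434/api/generate"  # assumes Ollama's default port


def iter_tokens(lines: Iterable[bytes]) -> Iterator[str]:
    """Yield text fragments from Ollama's newline-delimited JSON stream."""
    for line in lines:
        if not line.strip():
            continue
        chunk = json.loads(line)
        if chunk.get("response"):
            yield chunk["response"]
        if chunk.get("done"):
            break


def stream_wishes(prompt: str, model: str) -> Iterator[str]:
    """Send one streaming request and yield tokens as they arrive."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": True}).encode()
    request = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        # The HTTP response object iterates line by line, one JSON chunk each.
        yield from iter_tokens(response)
```

In a FastAPI route, a generator like `stream_wishes(prompt, model)` can be passed to `StreamingResponse(..., media_type="text/plain")`, and the Next.js client can append each chunk to the page as it arrives.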

⚙️ Performance Optimization Techniques

Backend

- Use smaller models when possible

- Limit max tokens

- Use temperature 0.6–0.8

- Cache static prompts
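Several of these backend settings map directly onto the request sent to Ollama, where `num_predict` caps the number of generated tokens and `temperature` sits under `options`. The sketch below is an assumption-laden illustration: `static_rules` and `build_payload` are hypothetical helpers, the default of 200 tokens is arbitrary, and the model name is left to the caller.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def static_rules(platform: str) -> str:
    """Build the unchanging part of the prompt once per platform."""
    return "\n".join([
        f"Format the output for {platform}.",
        "Separate wishes with a blank line.",
        "Do not add headings.",
    ])


def build_payload(model: str, platform: str, user_request: str,
                  temperature: float = 0.7, max_tokens: int = 200) -> dict:
    """Assemble an Ollama /api/generate request body."""
    return {
        "model": model,
        "prompt": f"{static_rules(platform)}\n\n{user_request}",
        "stream": True,
        "options": {
            "temperature": temperature,   # within the 0.6-0.8 range above
            "num_predict": max_tokens,    # hard cap on generated tokens
        },
    }
```

Caching a short string saves little on its own; the pattern pays off when the static part is assembled from templates or files on every request.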

Frontend

- Disable UI while generating

- Show loading indicators

- Animate text appearance

Perception matters more than raw speed.

🧩 When Prompt Engineering Is Not Enough

Prompts alone fail when:

- Output depends on per-user data the model has never seen

- Context exceeds token limit

- Knowledge must be factual

- Responses must reference documents

This is where RAG becomes necessary.

📚 When to Introduce RAG

Use Retrieval-Augmented Generation if:

- You need memory

- You use documents

- You need factual grounding

- You require traceability

Do not use RAG for:

- Greetings

- Creative writing

- Generic content

Prompt-only systems are faster and simpler.
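For the cases that do need RAG, the core loop is small: retrieve relevant text, then ground the prompt in it. The sketch below uses naive keyword overlap purely for illustration; real pipelines typically use embeddings and a vector store, and the function names here are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by how many query terms they share (toy scoring)."""
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc)), doc) for doc in documents]
    ranked = sorted(
        (item for item in scored if item[0] > 0),
        key=lambda item: item[0],
        reverse=True,
    )
    return [doc for _, doc in ranked[:top_k]]


def build_grounded_prompt(question: str, documents: list[str]) -> str:
    """Place retrieved sources in the prompt so answers can cite them."""
    sources = retrieve(question, documents)
    numbered = "\n".join(f"[{i}] {doc}" for i, doc in enumerate(sources, start=1))
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"Sources:\n{numbered}\n\nQuestion: {question}"
    )
```

The numbered sources are what provide traceability: each claim in the answer can point back to a retrieved passage.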

🧠 Key Engineering Insight

Most AI apps do not fail because of the model.

They fail because of:

- Poor prompt governance

- Weak UX feedback

- No guardrails

- Unstable formatting

- Lack of streaming

AI engineering is system engineering.

🏗️ Production Checklist

Before shipping an AI app:

- ✅ Deterministic prompt structure

- ✅ Platform formatting rules

- ✅ Input validation

- ✅ Timeout handling

- ✅ Streaming support

- ✅ Clear UX feedback

- ✅ Containerized runtime

- ✅ Linux-based deployment
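Two of these checklist items, input validation and timeout handling, fit in a few lines. This is a minimal sketch, assuming a hypothetical set of allowed occasions, a 60-character name limit, and a 30-second default timeout; none of these values come from the project itself.

```python
import concurrent.futures
from typing import Callable

ALLOWED_OCCASIONS = {"birthday", "anniversary", "condolence"}  # hypothetical set
MAX_NAME_LENGTH = 60  # illustrative limit


def validate_request(occasion: str, recipient: str) -> tuple[str, str]:
    """Normalize user input and reject values the prompt should never see."""
    occasion = occasion.strip().lower()
    recipient = recipient.strip()
    if occasion not in ALLOWED_OCCASIONS:
        raise ValueError(f"unsupported occasion: {occasion!r}")
    if not recipient or len(recipient) > MAX_NAME_LENGTH:
        raise ValueError("recipient must be 1 to 60 characters")
    return occasion, recipient


def generate_with_timeout(generate: Callable[[str], str], prompt: str,
                          timeout_seconds: float = 30.0) -> str:
    """Run a blocking generate call, giving up after timeout_seconds."""
    pool = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = pool.submit(generate, prompt)
    try:
        return future.result(timeout=timeout_seconds)
    except concurrent.futures.TimeoutError:
        raise TimeoutError(f"generation exceeded {timeout_seconds}s") from None
    finally:
        # Stop waiting on the worker; the thread itself is not killed.
        pool.shutdown(wait=False)
```

Because the worker thread keeps running after a timeout, the underlying HTTP call to the model should carry its own timeout as well.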

🧠 What This Project Teaches

By building this app you learned:

- Local LLM orchestration

- Prompt engineering patterns

- Frontend AI UX design

- Containerization of LLM runtimes

- macOS vs Linux realities

- Cloud deployment mindset

These skills directly translate to:

- AI copilots

- Enterprise chatbots

- Knowledge agents

- Workflow automation

- RAG systems

🚀 From Demo App to AI Engineer

If you can:

- Control LLM behavior

- Enforce output contracts

- Design stable prompts

- Stream responses

- Deploy locally and in cloud

You are no longer experimenting.

You are engineering AI systems.

🧭 Final Architecture Summary

Next.js UI

↓

FastAPI Orchestrator

↓

Prompt Intelligence Layer

↓

Ollama Runtime

↓

Local LLaMA Model

This architecture scales naturally to:

- Agents

- MCP servers

- RAG pipelines

- Tool-using AI

✨ Final Thoughts

AI engineering is not about chasing models.

Models will change.

Frameworks will evolve.

But these fundamentals remain:

- Structured prompts

- Deterministic outputs

- Strong UX

- Clear architecture

- Deployment discipline

Master these, and any model becomes usable.