📦 Dockerizing Local LLMs with Ollama – Challenges on macOS (Part 4)

Sat Jan 17 2026

Containerizing FastAPI and Ollama — Real‑World Challenges, Especially on macOS

🧠 Series Context

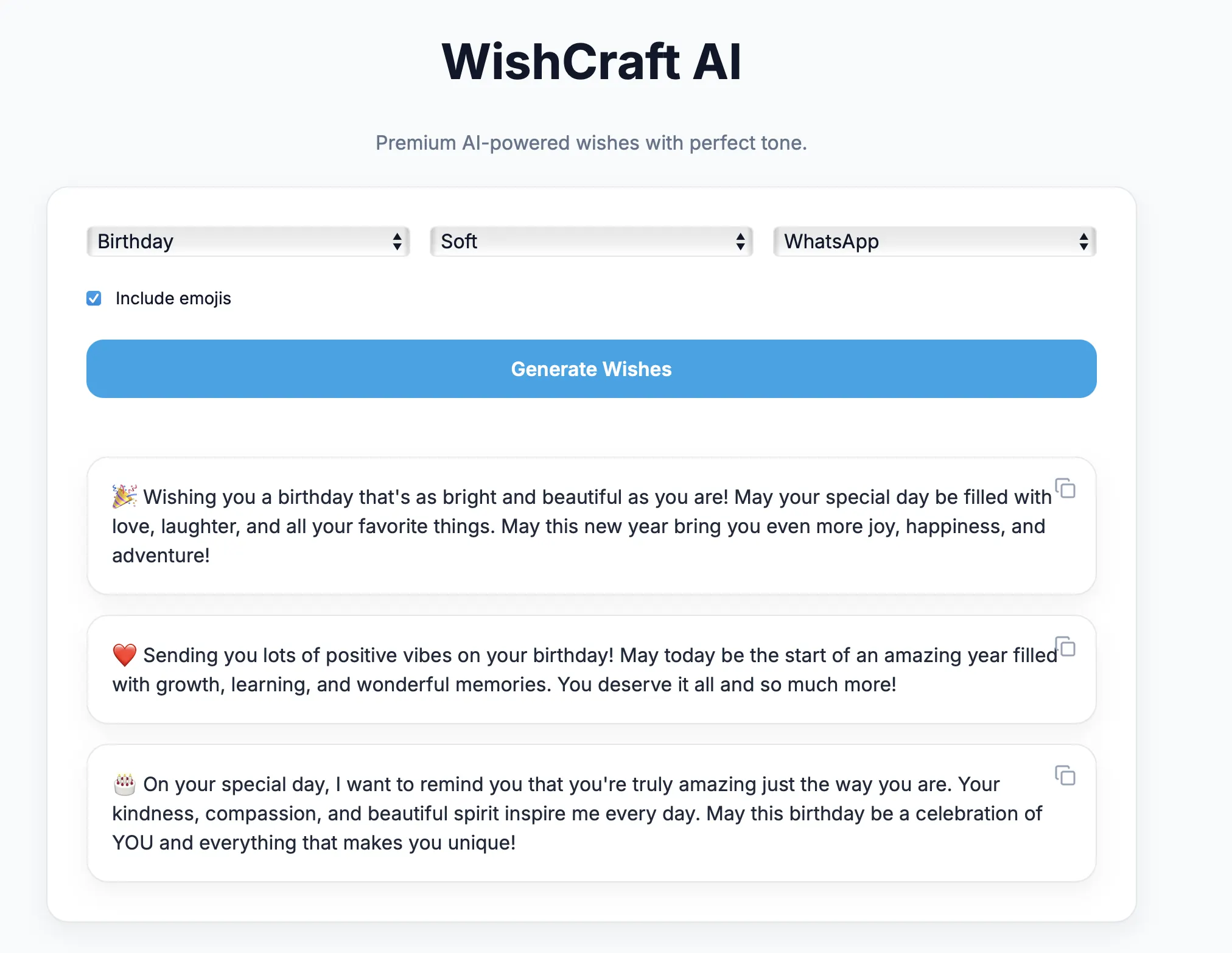

This is Part 4 of our AI engineering series where we are building a local LLaMA-powered AI Wish Generator.

So far we have covered:

- Part 1: Architecture & local LLM fundamentals

- Part 2: Backend development & prompt engineering

- Part 3: Modern Next.js frontend & device-agnostic UI

In this post we move into one of the most misunderstood areas of AI development:

Containerizing local LLMs and preparing them for cloud deployment.

📘 Series Roadmap

| Part | Topic |
| ------ | ---------------------------------- |
| Part 1 | Architecture & fundamentals |
| Part 2 | Backend & prompts |
| Part 3 | Frontend & UX |
| Part 4 | Dockerization & cloud shipping |
| Part 5 | AI Engineering Lessons from Building a Local LLM App |

🎯 Objective of Part 4

By the end of this post you will understand:

- Why Docker is essential for AI apps

- How to containerize FastAPI cleanly

- How to containerize Ollama + LLaMA

- Why macOS causes unique problems

- How to fix networking, IPv6 and timeout issues

- How the same setup works flawlessly on Linux

- How this architecture can be shipped to the cloud

🧠 Why Containerization Matters in AI

AI systems are not single applications.

They consist of:

- Backend services

- LLM runtimes

- Model weights (GBs)

- Networking layers

- GPU / CPU dependencies

Docker provides:

- Environment consistency

- Easy deployment

- Version control for infrastructure

- Predictable runtime behavior

Without containers, AI apps become fragile.

🧱 Container Architecture

┌──────────────────────────┐
│     Next.js Frontend     │
│        (optional)        │
└────────────▲─────────────┘
             │
┌────────────┴─────────────┐
│     FastAPI Backend      │
│         (Docker)         │
└────────────▲─────────────┘
             │ HTTP
┌────────────┴─────────────┐
│        Ollama LLM        │
│    (Docker container)    │
└────────────▲─────────────┘
             │
┌────────────┴─────────────┐
│    LLaMA Model Files     │
│         (volume)         │
└──────────────────────────┘

Each component runs independently.

🐳 Dockerizing the FastAPI Backend

Dockerfile

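# Slim base image keeps the final image small
FROM python:3.11-slim

WORKDIR /app

# curl is useful for checking connectivity from inside the container
RUN apt-get update \
    && apt-get install -y curl \
    && rm -rf /var/lib/apt/lists/*

# Install dependencies first so this layer is cached between code changes
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

COPY app ./app

EXPOSE 8000

# Bind to 0.0.0.0 so the server is reachable from outside the container
CMD ["uvicorn", "app.main:app", "--host", "0.0.0.0", "--port", "8000"]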

Why this works well

- Lightweight image

- Predictable Python version

- No dependency on the Python version or packages installed on the host

- Clean startup

🦙 Dockerizing Ollama

Ollama provides an official image:

image: ollama/ollama:latest

This container includes:

- Ollama runtime

- Model manager

- REST API server

Models are stored using volumes.

📄 Docker Compose Setup

version: "3.9"

services:
  ollama:
    image: ollama/ollama:latest
    container_name: ollama
    ports:
      - "11434:11434"
    volumes:
      - ollama_data:/root/.ollama
    environment:
      - OLLAMA_HOST=0.0.0.0:11434

  api:
    build: .
    container_name: wish-api
    ports:
      - "8000:8000"
    depends_on:
      - ollama

volumes:
  ollama_data:

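On Linux servers this setup works as written. The api container reaches Ollama by its service name, at http://ollama:11434, over the default Compose network.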
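One caveat with the Compose file above: the short form of `depends_on` only orders container startup. It does not wait until Ollama's HTTP server is accepting requests. A minimal sketch of a startup check the backend could run is shown below. It uses only the standard library; the `OLLAMA_BASE_URL` variable name and the `wait_for_ollama` helper are assumptions made for this example, not part of the original project.

```python
import os
import time
import urllib.request

# OLLAMA_BASE_URL is a hypothetical variable name chosen for this sketch.
# "ollama" is the Compose service name, resolved by Docker's built-in DNS.
OLLAMA_BASE_URL = os.environ.get("OLLAMA_BASE_URL", "http://ollama:11434")


def wait_for_ollama(base_url: str = OLLAMA_BASE_URL,
                    attempts: int = 30,
                    delay_seconds: float = 2.0) -> bool:
    """Poll the Ollama root endpoint until it answers or attempts run out."""
    for _ in range(attempts):
        try:
            with urllib.request.urlopen(base_url, timeout=3) as response:
                if response.status == 200:
                    return True
        except OSError:
            # Covers URLError, refused connections, and timeouts:
            # the server is not reachable yet, so retry after a pause.
            pass
        time.sleep(delay_seconds)
    return False
```

Calling `wait_for_ollama()` once at application startup, and failing fast if it returns `False`, tends to produce a clearer error than a timeout on the first user request.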

⚠️ The macOS Problem (Important)

If you are developing on macOS, you will almost certainly face issues.

Common errors

- Connection timeout to Ollama

- curl http://ollama:11434 fails inside the container

- Ollama listening on [::]:11434 (IPv6 binding only)

- Docker DNS resolution failures

Example log:

Listening on [::]:11434

This single line causes hours of debugging.
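To narrow down which of these failures you are hitting, it can help to test DNS resolution and the TCP connection separately for each address family. The following is a small diagnostic sketch using only the standard library; the `probe` function is a hypothetical helper written for this post, not part of Ollama or Docker.

```python
import socket


def probe(host: str, port: int, timeout: float = 3.0) -> dict:
    """Try a TCP connect to every address that the host name resolves to.

    Returns a mapping such as {"IPv4 172.18.0.2": True, "IPv6 fd00::2": False}.
    """
    results = {}
    try:
        infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    except socket.gaierror as exc:
        # The name did not resolve at all: a DNS problem, not a routing one.
        return {f"DNS lookup failed: {exc}": False}
    for family, socktype, proto, _canonname, sockaddr in infos:
        label = "IPv6" if family == socket.AF_INET6 else "IPv4"
        key = f"{label} {sockaddr[0]}"
        try:
            with socket.socket(family, socktype, proto) as sock:
                sock.settimeout(timeout)
                sock.connect(sockaddr)
            results[key] = True
        except OSError:
            results[key] = False
    return results
```

Running `print(probe("ollama", 11434))` from inside the api container shows whether the name resolves, and which of the resolved addresses actually accept a connection.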

❌ Why Ollama Breaks on macOS Docker

macOS uses:

- Docker Desktop

- Linux VM under the hood

Problems arise because:

- Ollama binds to IPv6 (::)

- Docker bridge networking uses IPv4

- IPv6 → IPv4 routing fails silently

Result:

Containers cannot talk to Ollama even though it is running.

✅ Workaround That Actually Works

Instead of container-to-container communication:

Run Ollama on the host

ollama serve

Then let Docker containers access it via:

host.docker.internal

Backend configuration

OLLAMA_URL = "http://host.docker.internal:11434/api/generate"

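This sidesteps container-to-container networking entirely: the request leaves the container and reaches the Ollama process running on the host.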
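Hardcoding the URL makes it awkward to switch between macOS and Linux. A minimal sketch of a backend call that reads the URL from the environment and sets an explicit timeout might look like this. It assumes the non-streaming form of Ollama's `/api/generate` endpoint; the `generate` helper and the 120-second default are illustrative choices, not the project's actual code.

```python
import json
import os
import urllib.request

# The default matches the macOS workaround above. Override it through the
# environment, e.g. http://ollama:11434/api/generate under Compose on Linux.
OLLAMA_URL = os.environ.get(
    "OLLAMA_URL", "http://host.docker.internal:11434/api/generate"
)


def generate(prompt: str, model: str = "llama3",
             url: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """Send one non-streaming request to Ollama and return the generated text."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "stream": False}
    ).encode("utf-8")
    request = urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    # A generous timeout: local models can take a while on the first
    # request, when the weights are loaded into memory.
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["response"]
```

With this in place the same image runs unchanged on both platforms; only the `OLLAMA_URL` environment variable differs.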

🧠 Why This Is Not a Hack

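This is a documented pattern rather than an improvised one. host.docker.internal is the hostname Docker Desktop provides for reaching services on the host, and the same setup is commonly used with:

- LangChain

- LlamaIndex

- Ollama community projects

It is a widely used approach on macOS.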

🐧 Why Linux Has No Issues

On Linux:

- Docker runs natively

- No VM layer

- Proper IPv4 networking

- GPU access supported (NVIDIA GPUs, via the NVIDIA Container Toolkit)

Containerized Ollama works perfectly.

This is why cloud deployments are stable.

☁️ Shipping to Cloud

Once containerized, deployment becomes trivial.

Supported platforms:

- AWS EC2

- Azure VM

- GCP Compute Engine

- DigitalOcean

- Hetzner

Steps:

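docker compose up -d

docker exec ollama ollama pull llama3

The pull runs inside the ollama container, so the model is stored in the ollama_data volume and survives container restarts.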

That’s it.
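After deploying, it is worth confirming that the model really is available before sending traffic. The sketch below queries Ollama's `/api/tags` endpoint, which lists locally installed models. The helper names and the `http://localhost:11434` default (the port published in the Compose file) are assumptions made for this example.

```python
import json
import urllib.request


def installed_models(base_url: str = "http://localhost:11434",
                     timeout: float = 5.0) -> list[str]:
    """Return the names of the models the Ollama server has installed."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as response:
        data = json.load(response)
    return [entry["name"] for entry in data.get("models", [])]


def has_model(name: str, models: list[str]) -> bool:
    """Match a bare name like "llama3" against tagged names like "llama3:latest"."""
    return any(m == name or m.split(":", 1)[0] == name for m in models)
```

A deployment script could call `has_model("llama3", installed_models())` and run the pull step only when it returns `False`.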

🚀 GPU Acceleration (Optional)

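On Linux servers with NVIDIA GPUs and the NVIDIA Container Toolkit installed, extend the ollama service with the following (merge the variable into the existing environment list):

runtime: nvidia
environment:
  - NVIDIA_VISIBLE_DEVICES=all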

This enables:

- Faster inference

- Larger models

- Lower latency

Docker Desktop on macOS does not expose the GPU to containers.

🔄 Final Deployment Architecture

Internet

↓

NGINX / Load Balancer

↓

FastAPI (Docker)

↓

Ollama (Docker)

↓

LLaMA Model

The same design scales horizontally: add more FastAPI and Ollama containers behind the load balancer.

⚡ Key Engineering Learnings

- Dockerizing AI apps is harder than web apps

- LLM runtimes behave differently across operating systems

- macOS is for development, not hosting

- Linux is the true AI deployment platform

Understanding this saves days of debugging.